Also called split testing or bucket testing, A/B testing is a marketing research tool used by businesses to determine which version of a piece of content, A or B, appeals more to consumers. Ultimately, the option that yields more customer interaction is used going forward.

What Is A/B Testing And How To Use It

Written by: Victoria Yu

Victoria Yu is a Business Writer with expertise in Business Organization, Marketing, and Sales, holding a Bachelor’s Degree in Business Administration from the University of California, Irvine’s Paul Merage School of Business.

Edited by: Sallie Middlebrook

Sallie, holding a Ph.D. from Walden University, is an experienced writing coach and editor with a background in marketing. She has served in corporate communications roles and taught at institutions like the University of Florida.

Updated on November 28, 2025

As you develop and hone your marketing and sales strategies, you might have all the broad strokes figured out but sometimes hesitate on the smaller details. Would a “Swift Sales Closing” message be better, or “Next-Level Lead Nurturing”? Should you place this website button at the top or on the side? Do website visitors prefer a colder color scheme or a warmer one?

There are a thousand minute decisions that go into crafting an amazing, intuitive, and appealing customer experience online, and each one has the opportunity to make or break a potential sale. But as you know, most companies can't afford to spend hours running a full customer needs assessment for every little detail.

Luckily, a handy marketing tool called A/B testing can save the day. This marketing research technique helps businesses decide which option or feature, A or B, appeals more to consumers. If deliberating over these countless little choices is becoming the straw that might break your business's back, we've developed this guide just for you. It will help you understand what exactly A/B testing is, what situations you can use it in, and how to use it to finesse your business operations.

Key Takeaways

A/B testing is a research tool used to determine which of two versions of marketing content performs better with consumers or customers.

A/B testing is popular because it’s a low-cost method to gain insight into consumer sentiment for small details that don’t warrant full-breadth research.

There are two types of A/B testing: between-subject and within-subject testing. Each has its own advantages and disadvantages depending on the situation.

There are five steps to conducting an A/B test: setting your variables, operationalizing your details, running the test, analyzing the results, and implementing the necessary changes.

What Is A/B Testing?

A/B testing, also called split testing or bucket testing, compares two versions of the same piece of content, A and B, to see which one consumers respond to better. Rather than being used only to pick a content version during the development stage, A/B testing can be done at any point in a marketing asset's lifetime, even post-launch. This lets a company continuously tweak its customer experience and marketing content, keep up with constantly shifting customer preferences, and improve customer satisfaction over the long term.

A/B testing can be used for almost any type of customer-facing content, but some of the most popular use cases are:

- Websites: UX and UI elements, website design, headlines, images, lead magnet forms

- Emails: subject lines, calls to action, greetings, body copy, body length

- Advertisements: images, fonts and colors, calls to action, placement, duration

- Mobile apps: UX and UI elements, navigation, colors and fonts, image sizes, pop-ups

Why Is A/B Testing Important?

Now, you might wonder: why go through all this fuss to conduct behind-the-scenes testing? Wouldn’t it be better to simply ask the customer which of the two versions they’d prefer?

Though it's best to ask customers directly for their broader opinions and preferences on the company and product, customers often don't want to be bothered about the smaller details of marketing materials. Think about it: when was the last time you gave serious thought to the subject lines of the promotional emails companies sent you? Probably never. But even though customers rarely have a top-of-mind opinion on minor marketing details, they may still react to them, often subconsciously, when a detail falls flat.

Not only would a full-blown market research survey likely return ambiguous results for such small details, but it would also be expensive to run every time the company needed to check customer opinions. A/B testing, on the other hand, is relatively cheap: at its simplest, all you need is two versions of the same content that differ in a single detail.

And speaking of money, companies would be wise to use A/B testing to improve their online marketing tools. According to the US Department of Commerce, e-commerce sales in the first quarter of 2023 were $272.6 billion. And that's just the first quarter! Statista predicts that e-commerce revenue will reach $940 billion by the end of that year and continue to grow every year after, forecasting almost $1.5 trillion in revenue by 2027.

In other words, e-commerce and digital selling are shaping up to be a lucrative market. No matter what industry you operate in or who you sell to, improving your digital marketing channels can only benefit you in the long term by helping you snatch a little more of the market pie.

As such, A/B testing is a great way for companies to discreetly, conclusively, and cheaply optimize these details without pestering consumers, improving bottom lines and revenue brought in from digital marketing channels.

Types of A/B Testing

Before we get into how to conduct A/B testing, the first thing you should know is that there are actually two forms of A/B testing: between-subject A/B testing and within-subject A/B testing.

1. Between-Subject A/B Testing

Between-subject A/B testing randomly divides people into two groups and exposes each group to either option A or option B. The better option is the one whose group shows the higher conversion rate, meaning the share of visitors or recipients who take the desired action. This is likely what you'll picture when you hear the basic premise of A/B testing.

For example, you could randomly divide your email list into two groups and send two marketing emails that are identical except for the subject line. If one group shows a meaningfully higher open rate, click-through rate, or response rate, you'll know to write future subject lines similar to the one that group received.
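A minimal sketch of that random split in Python, assuming your email list is available as a simple list of addresses. The function name and sample addresses are hypothetical; most email platforms with built-in A/B features handle this step for you.

```python
import random

def split_into_groups(recipients, seed=None):
    """Randomly assign each recipient to group A or group B (roughly 50/50)."""
    rng = random.Random(seed)      # seeded generator so the split can be reproduced
    shuffled = list(recipients)    # copy so the caller's list is not reordered
    rng.shuffle(shuffled)
    midpoint = len(shuffled) // 2
    return shuffled[:midpoint], shuffled[midpoint:]

# Hypothetical email list used only for illustration.
email_list = [f"subscriber{i}@example.com" for i in range(10)]
group_a, group_b = split_into_groups(email_list, seed=42)
print(len(group_a), len(group_b))  # 5 5
```

Group A would then receive the email with one subject line and group B the email with the other.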

2. Within-Subject A/B Testing

Within-subject A/B testing exposes the same group of people to both options A and B, asks them to complete the same goal with each, and compares their performance between the two. Because participants are told beforehand that they'll see both versions, this approach also gives them a little more informed consent.

For example, if you and a competitor sold a similar product, you could ask a member of your target audience to purchase the product on your website and then ask them to purchase the similar product on the competitor’s website. If the participant completed their purchase notably faster on the competitor’s website, that would signal to you that you need to improve your own website design.

3. Which One Is Better?

There are pros and cons to both between- and within-subject testing. Which one you choose should depend on the specifics of what you’re testing.

On one hand, between-subject testing sessions are often shorter and easier to set up, as you simply need to direct participants to one of the two versions. However, they require more participants than within-subject tests, as you’ll need a viable sample size for both versions of the test.

On the other hand, within-subject tests require fewer participants, as the same participant can be counted for both tests. They also minimize random noise factors such as a participant’s mood – if a person is happy or grumpy when testing one version, they’ll probably be happy or grumpy when testing the other. However, within-subject tests require more time to set up for each test, as the order of versions must be randomized for each participant. Depending on the test being conducted, there’s also a risk of knowledge transfer between sessions.

For example, when testing navigation between two similar website pages from the same company, the participant will become familiar with the general layout from the first version. As a result, they will likely navigate the second version faster regardless of which design is actually better.
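Balancing the order of versions, so that about half of participants see A first and half see B first, keeps that learning effect from consistently favoring one version. A minimal sketch, assuming participants are identified by simple string IDs (the IDs and function name are hypothetical):

```python
import random

def assign_orders(participant_ids, seed=None):
    """Counterbalance version order: half see A first, half see B first."""
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)  # random positions, so who gets which order is random
    orders = {}
    for position, pid in enumerate(ids):
        # Alternating after the shuffle keeps the two orders as balanced as possible.
        orders[pid] = ("A", "B") if position % 2 == 0 else ("B", "A")
    return orders

orders = assign_orders(["p1", "p2", "p3", "p4"], seed=7)
for pid, order in sorted(orders.items()):
    print(pid, "sees", " then ".join(order))
```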

If you’re strapped for time and have a lot of participants to draw from, we’d recommend you conduct between-subject testing. Otherwise, if you can afford to take more time, have fewer participants, and want to draw more conclusive results, we’d recommend you conduct within-subject testing.

How to Conduct A/B Testing

Now that you know what A/B testing is, how exactly do you implement it yourself? Here’s a five-step guide to accurately conducting A/B testing for your own business.

1. Set Your Variables

First of all, what exactly are you going to test? What outcomes are you hoping to achieve? These form the independent and dependent variables for your test.

Though you might have several elements of your marketing content that you want to optimize, testing one element at a time lets you attribute whatever change emerges to the independent variable you chose. The dependent variable you set should then be something that's directly affected by that independent variable.

You may even go one step further and set a concrete hypothesis, such as predicting that the dependent variable will improve by at least 10%. You can use this as a guideline for action: if the dependent variable doesn't change by an amount that's meaningful for your company, it may not be worth taking action.
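A hypothesis like this boils down to a simple check. The sketch below assumes "at least 10%" means a relative improvement over version A (from a 5.0% rate to 5.5% or more), not 10 percentage points; the function names and rates are hypothetical.

```python
def relative_change(baseline, variant):
    """Relative change of the variant's metric versus the baseline (0.10 means +10%)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute a relative change")
    return (variant - baseline) / baseline

def meets_threshold(baseline, variant, min_change=0.10):
    """True if the variant improves on the baseline by at least min_change."""
    return relative_change(baseline, variant) >= min_change

# Hypothetical rates: 5.0% for version A, 5.6% for version B.
print(round(relative_change(0.050, 0.056), 3))  # 0.12
print(meets_threshold(0.050, 0.056))            # True
```

Meeting the threshold only tells you the change is big enough to matter; whether it is real rather than random noise is a separate question, covered in the analysis step.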

For example, say your company sends monthly newsletters to potential customers. Some elements you could test are the subject line, the greeting line, the text length, the images, the appeal technique, and the call to action. Your emails unfortunately haven’t been seeing much success in generating customers, so you decide to change the text length (independent variable) to see if it will improve email performance (dependent variable).

2. Operationalize

Now that you have the broad strokes of your test, it’s time to flesh out each variable, designate your A version and B version, and hammer out the finer details of how you’re going to conduct the test. This process is called operationalization.

Going back to our example, we originally set the length of our email as our independent variable. Our current emails clock in at around 600 words of text, so this length is our control, version A. Our version B could then be emails of around 200 words. Finally, we could decide that our dependent variable will be the click-through rate: the share of readers who click a link from the email to our website.

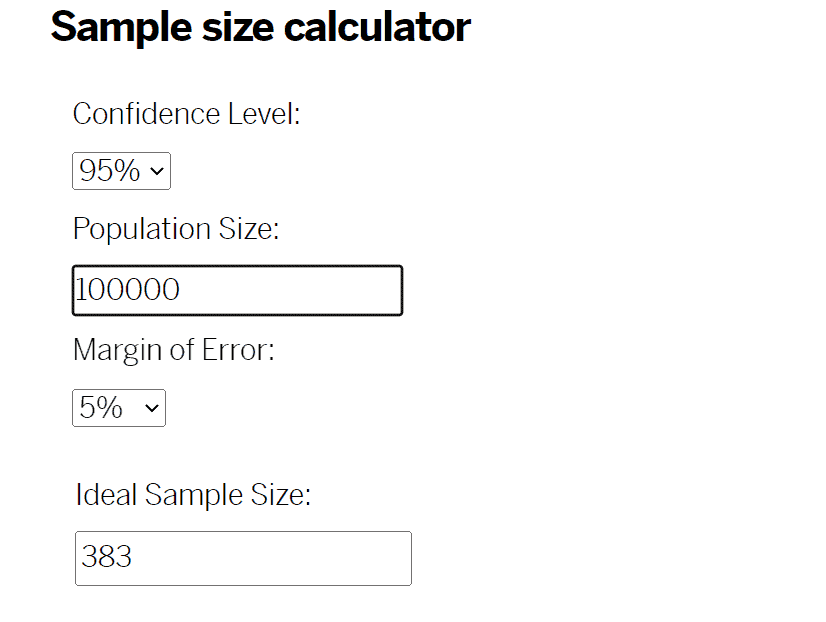

This is also the step where you should choose whether to use between- or within-subject testing, determine the duration of your experiment, set your sample size, and decide your confidence threshold. If you're not familiar with these terms, tools and guides from Qualtrics and Analytical Toolkits can help you determine what each of these figures will need to be.
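Writing the operationalized details down in one place makes it harder to change something mid-test by accident. Here is one way to record the newsletter example as a small Python data structure. The class and field names are our own illustration, not a format any testing tool requires; the 383-per-version sample size matches the email example later in this guide, and the 14-day duration is a hypothetical placeholder.

```python
from dataclasses import dataclass

@dataclass(frozen=True)  # frozen: the plan can't be edited once the test starts
class ABTestPlan:
    """Operationalized details of one A/B test (field names are illustrative)."""
    independent_variable: str
    version_a: str                 # control: what you do today
    version_b: str                 # the single change being tested
    dependent_variable: str
    design: str                    # "between-subject" or "within-subject"
    duration_days: int
    sample_size_per_version: int
    confidence_level: float        # e.g. 0.95 for 95% confidence

    def __post_init__(self):
        if self.design not in ("between-subject", "within-subject"):
            raise ValueError("design must be 'between-subject' or 'within-subject'")
        if not 0 < self.confidence_level < 1:
            raise ValueError("confidence_level must be between 0 and 1")

newsletter_test = ABTestPlan(
    independent_variable="email text length",
    version_a="~600 words (current emails)",
    version_b="~200 words",
    dependent_variable="click-through rate",
    design="between-subject",
    duration_days=14,              # hypothetical
    sample_size_per_version=383,
    confidence_level=0.95,
)
```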

3. Conduct the A/B Test

With all of the fine details and goals determined, all that’s left is to run the test itself.

But of course, depending on the type of content you’re testing, it can be difficult and messy to manually track which potential customer saw which version of your content. That’s why most businesses use A/B testing software tools such as Convert for website testing or Campaign Monitor for email testing.
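One common way testing tools keep track of who saw which version is deterministic bucketing: hash a stable customer ID together with the test name, and use the result to pick A or B. We're not claiming this is how Convert or Campaign Monitor work internally; it's a minimal sketch of the idea, assuming every customer has a stable ID. The function and test names are hypothetical.

```python
import hashlib

def assign_version(customer_id: str, test_name: str) -> str:
    """Deterministically bucket a customer into "A" or "B" for a given test.

    The same customer always lands in the same bucket for the same test,
    so nothing needs to be stored to remember who saw which version.
    Including the test name lets different tests split customers independently.
    """
    key = f"{test_name}:{customer_id}".encode("utf-8")
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % 100  # 0..99
    return "A" if bucket < 50 else "B"

version = assign_version("customer-12345", "newsletter-length-test")
print(version)
```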

Remember: when you’re running your A/B test, it’s important not to change anything else about your content, lest you skew the results. You should also be sure to run both versions A and B simultaneously. If you wait between running version A and version B, a new variable, such as changing market conditions, may arise and also skew the results. The only time when you would run A and B at separate times is if your independent variable was time itself, such as the best time to make a cold call.

How long you run your A/B test will depend on your sample size and how much traffic your marketing content normally gets. As we mentioned before, within-subject tests need roughly half as many participants as between-subject tests, because each participant is counted for both variations, so recruiting for them can finish sooner.
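As a rough estimate, divide the number of participants you need by the number you can reach per day. This sketch uses the 766-response total from the email example that follows and a hypothetical 60 participants per day, and it assumes the simplification above that a within-subject test needs half as many people.

```python
import math

def estimated_days(participants_needed: int, participants_per_day: float) -> int:
    """Days needed to reach the target number of participants at a steady daily rate."""
    if participants_per_day <= 0:
        raise ValueError("participants_per_day must be positive")
    return math.ceil(participants_needed / participants_per_day)  # round up to whole days

DAILY_PARTICIPANTS = 60  # hypothetical traffic

# Between-subject: each person sees one version, so 766 people give 766 observations.
between_days = estimated_days(766, DAILY_PARTICIPANTS)
# Within-subject: each person sees both versions, so 383 people give 766 observations.
within_days = estimated_days(766 // 2, DAILY_PARTICIPANTS)
print(between_days, within_days)  # 13 7
```

In practice, many teams also run a test for at least one full week so that weekday and weekend behavior are both represented.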

Back to our email campaign example, we might use a tool in our email automation software to run our A/B test. Say there are 100,000 people in our target market. According to Qualtrics' sample size calculator, we should then collect at least 383 responses per version, or 766 in total, for a 95% confidence level with a 5% margin of error.
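If you're curious where a figure like 383 comes from, a standard approach is Cochran's sample-size formula with a finite population correction, using the most conservative assumed proportion of 0.5. We can't confirm this is exactly what the Qualtrics calculator does internally, but for these inputs it reproduces the same number:

```python
import math
from statistics import NormalDist

def sample_size(population: int, confidence: float = 0.95,
                margin_of_error: float = 0.05, proportion: float = 0.5) -> int:
    """Cochran's sample-size formula with a finite population correction."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # about 1.96 for 95%
    n0 = z**2 * proportion * (1 - proportion) / margin_of_error**2  # infinite population
    n = n0 / (1 + (n0 - 1) / population)  # shrink for a finite population
    return math.ceil(n)  # always round up

per_version = sample_size(100_000)
print(per_version, per_version * 2)  # 383 766
```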

4. Analyze the Data

Once you’ve collected enough data points, you can close out your A/B test and begin analyzing the degree to which the dependent variable has changed between the two.

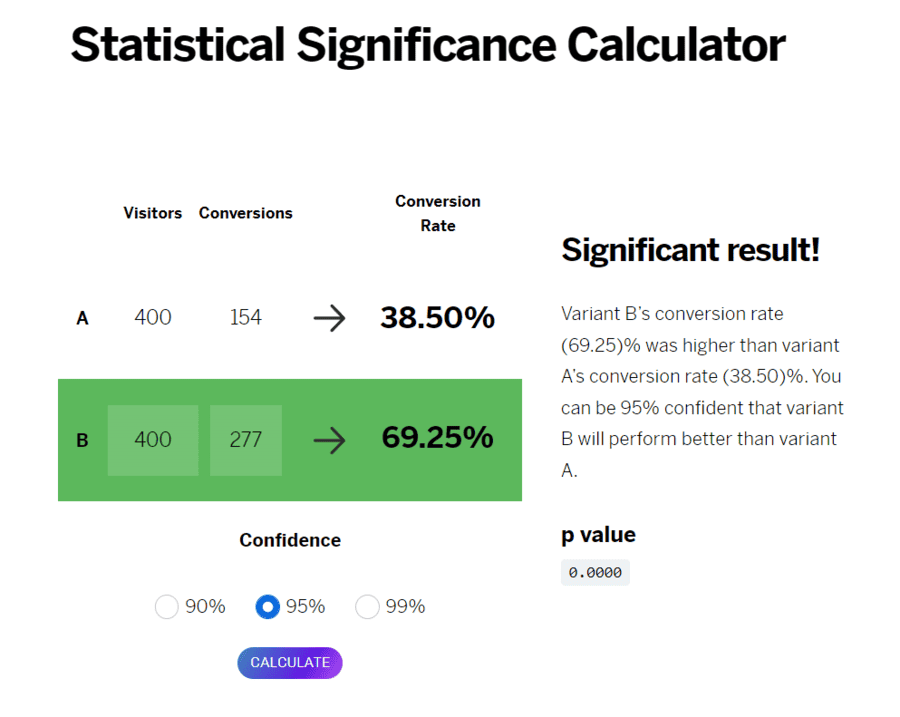

Using the number of participants and successful conversions for each version, you can determine whether the difference between A's and B's results is statistically significant. Qualtrics has a free statistical significance calculator you can use to help you make this determination.
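If you'd rather check significance yourself, a two-proportion z-test is one standard way to compare two conversion rates (we're not asserting it's the exact test the Qualtrics calculator runs). The sketch below uses 400 recipients per version as in the example that follows, but the click-through counts of 20 and 38 are hypothetical, not the results shown in the image.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test for a difference between two conversion rates.

    Returns (rate_a, rate_b, z, p_value).
    """
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # overall rate if A and B were identical
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("cannot test: conversions are all 0 or all 100%")
    z = (rate_b - rate_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided
    return rate_a, rate_b, z, p_value

# Hypothetical counts: 400 recipients per version, 20 vs. 38 click-throughs.
rate_a, rate_b, z, p = two_proportion_z_test(20, 400, 38, 400)
significant = p < 0.05  # 95% confidence threshold
print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  z = {z:.2f}  p = {p:.3f}  significant: {significant}")
```

A p-value below 0.05 corresponds to the 95% confidence threshold set during operationalization; with a stricter threshold, you would compare against a smaller cutoff.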

Let's say that we collected a nice, even 800 results: 400 each for versions A and B. We would enter those figures, along with the number of click-throughs for each version, into the calculator to get each version's conversion rate and see whether the difference between them is significant.

With version B's conversion rate nearly double version A's, we can tell that our shorter emails performed much better than the longer alternative. This supports our hypothesis: the click-through rate did indeed increase by at least 10%.

5. Implement the Results

With clear evidence of which content, version A or B, performs better, all that's left is to use those results to plan your next marketing strategies. You might discover that the new content, version B, performs better, and immediately tell your marketing team to develop content following those standards.

But even if your results show that what you already have, version A, works better, that doesn't mean the test was worthless. Instead, you can take it as a sign that your business is already using effective marketing tactics, and you can deprioritize future plans that rely on the elements you tested in version B.

Confident that our shorter emails generate more click-throughs than our longer ones, our example company would instruct the entire marketing department to update its email templates, cutting copy to about 200 words. We would then expect more website traffic from emails and, if all goes well, an uptick in paying customers down the line.

Conclusion

For those who haven't looked at statistics since high school, A/B testing can seem fairly complex. But as a low-cost tool to continuously improve your marketing campaigns, its importance to growing businesses can't be overstated. By following these five steps, you can be well on your way to improving your websites, emails, ads, and more, making progress for your company one test at a time.

FAQs

What are some other ways I can use A/B testing?

While we focused primarily on how a company could use A/B testing to hone and optimize two versions of its own content, A/B testing can also be used to compare one company's content, such as its website, to another company's website. Of course, you'll have to conduct within-subject A/B testing, as you won't have developer access to another company's website.

You could also implement the methodology of A/B testing in other parts of your company, such as sales and customer service. For example, you could have sales representatives try out two different greetings for customers, or service agents attempt two different explanations for a product fix. A/B testing is quite versatile, so feel free to experiment with it in all aspects of your company.

What are some other ways to determine customer sentiment?

As we mentioned before, A/B testing is best suited to small details. If you're unsure about broader questions, such as which communication channels or appeals to use in the first place, you should instead conduct a consumer needs assessment with more complex tools such as surveys, observations, interviews, or two-factor experiments. These will give you more in-depth and qualitative information.

What are some best practices for conducting A/B testing?

When choosing the dependent variable for your A/B test, it's important to pick a KPI that directly reflects the effect of your independent variable. For example, if you were testing how easy your website is to navigate, it wouldn't make sense to use customer acquisition as your goal metric; instead, you would choose something like the average time it takes to complete a purchase. You want a metric that responds directly to the change you're testing.
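For a time-based KPI like this, the comparison starts with each version's average and spread. A minimal sketch with hypothetical completion times in seconds; the function name is ours, and `statistics.stdev` needs at least two measurements per version.

```python
from statistics import mean, stdev

def summarize_completion_times(times_by_version):
    """Average and spread of purchase-completion times (seconds) per version."""
    return {
        version: {"mean": mean(times), "stdev": stdev(times), "n": len(times)}
        for version, times in times_by_version.items()
    }

# Hypothetical completion times in seconds.
summary = summarize_completion_times({
    "A": [95, 110, 102, 120, 98],
    "B": [80, 85, 92, 78, 88],
})
for version, stats in summary.items():
    print(version, f"mean {stats['mean']:.1f}s", f"stdev {stats['stdev']:.1f}s", f"n={stats['n']}")
```

A lower average for one version is a starting point; as with conversion rates, you would still check whether the gap is statistically significant before acting on it.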

Additionally, make sure you’ve aligned marketing and sales and told other employees down the line that customers might come in with two slightly-different-but-nearly-identical customer journeys. Otherwise, sales reps might be caught off-guard when customers have a different marketing message or experience than what they expected. No need to create a Mandela Effect, or convince your employees they’ve been teleported to an alternate universe!